Visualization plays an important role in understanding and in advancing knowledge on the basis of empirical data. In the early stages of such research, visualization helps to explore the data interactively, to get an overview and to form meaningful hypotheses; in later stages, it helps to control and steer partially automated analyses; and in the final stages, where fully automated data analysis procedures are available, it provides summaries of the results that foster understanding. Visualization is also instrumental in design, be it the design of pharmaceutically active compounds, the design of objects, or the planning of clinical therapies.

The Visual and Data-centric Computing department develops tools for extracting relevant information from data sets such as time series, spatiotemporal data and high-dimensional data. The data may result from observations or simulations; they may cover single or multiple length and time scales; they may be crisp or fuzzy; and they may be complete or incomplete. We are interested in application-relevant structures hidden in the data; to reveal these, we develop methods for their identification, extraction, classification, quantification and visualization. Only in simple cases do the structures have a purely mathematical character; typically, modeling them requires domain knowledge and consideration of the specific data characteristics and analysis questions.

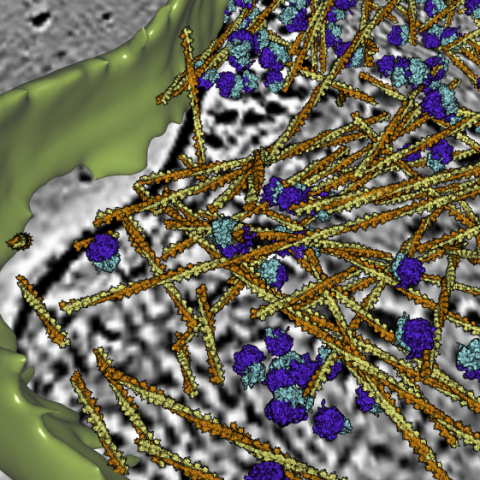

Therefore, all our research projects are embedded in applications. We work on problems in biology, biophysics, neuroscience, medicine, materials science, fluid mechanics, meteorology and climate research. Furthermore, we develop solutions for simulation-based therapy planning to advance individualized medicine.

To build practically useful analysis systems, we develop, adapt and combine methods from data analysis, image analysis, geometry processing, computer graphics, data visualization, machine learning and human-computer interaction.

The software solutions are interactive, semi-automatic or fully automatic, depending on factors such as the complexity of the analysis tasks, the degree of automation attainable and the size of the data sets to be analyzed. This flexible approach enables us to solve complex tasks that cannot be fully automated and that require a human in the loop.

The research software we develop is made available to application partners, with whom we collaborate to clarify the analysis tasks, to evaluate the quality of the algorithmic solutions and to improve them. Mature solutions are transferred to industrial partners, who integrate them into products and distribute them.